How a Leaked Signal Chat, DOGE, and the Dawn of AGI Revealed a Government Lost in Tech

A mistakenly leaked Signal chat, reckless cost-cutting in the DOGE initiative, and looming AGI challenges highlight the Trump administration's alarming inability to manage technology. As the stakes rise, can the U.S. government adapt in time?

Written by

Jason Lu

On March 24th, The Atlantic published an account by editor-in-chief Jeffrey Goldberg, who discovered that he had been mistakenly added to a Signal group chat used by senior officials in the Trump administration to coordinate military action against Houthi targets in Yemen.

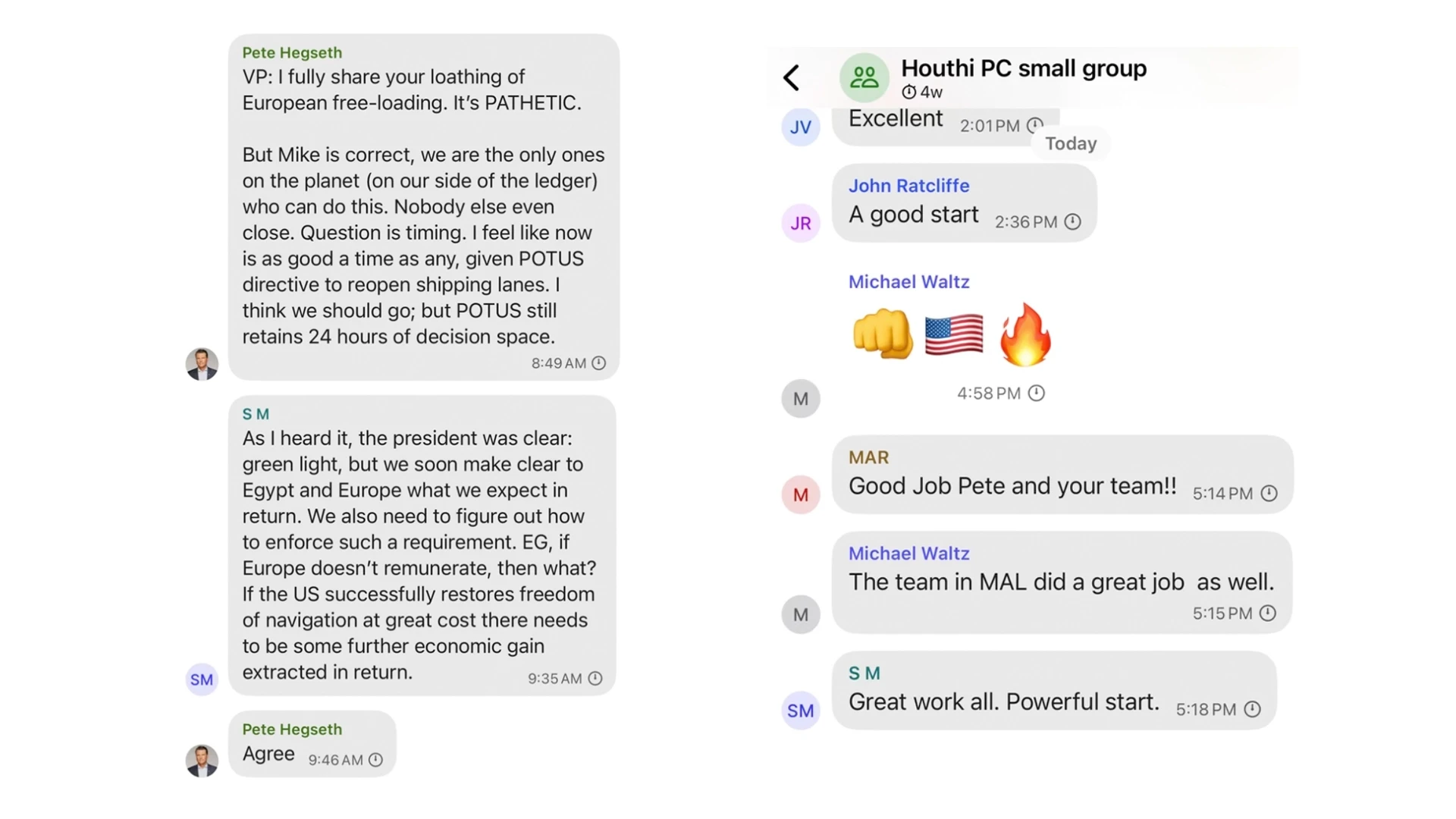

The group, titled “Houthi PC small group,” included messages from figures identified as Pete Hegseth, JD Vance, Marco Rubio, Tulsi Gabbard, and other national security principals. The conversation contained detailed discussions of policy, operational sequencing, and real-time planning. Goldberg remained a silent observer in the chat until the strikes began—then quietly left. No one noticed.

This scenario feels like satire, but the more you think about it, the less funny it gets. It wasn't just a tech mishap—it highlighted how parts of the government rely on casual, unsecured apps and sloppy procedures.

Convenience Over Security

The issue here isn't Signal itself. Signal is great for what it was built to do: private, encrypted conversations between journalists, activists, whistleblowers and everyday people wanting privacy. But it wasn't designed to manage sensitive, classified military operations.

Using Signal in government isn't entirely new. Officials commonly use it for quick messages, scheduling, or informal updates. But detailed military plans, complete with timing and targets, are another matter entirely. Classified networks like SIPRNet and JWICS, accessed from secure facilities (SCIFs), are built specifically for this kind of communication, with strict protocols, audit logging, and layered security checks. Using Signal bypasses all these protections, creating risks that no responsible system would tolerate.

But the Trump administration either didn't understand that or didn't care.

The real problem goes deeper: it's the growing assumption that easy-to-use consumer tools can replace structured, secure government processes. Sure, traditional systems can feel cumbersome. But that friction exists for a reason: it prevents dangerous mistakes.

Government, But Make It a Startup

The Signal incident alone would be troubling enough—but it's not an isolated mistake.

Earlier in the year, the Trump administration launched the Department of Government Efficiency (DOGE), an initiative aimed at making government run "like a startup," with Elon Musk serving as a senior advisor. Cutting unnecessary spending is good, but aggressively slashing essential roles and oversight without assessing their value isn't efficiency; it's shortsightedness. For instance, the administration's decision to eliminate 90% of USAID's foreign aid contracts, representing approximately $54 billion in cuts, had serious consequences, undermining vital humanitarian programs worldwide. These cuts jeopardized critical health services, including HIV treatment relied upon by millions globally. (Which raises the question: why does Musk seem more focused on tweeting memes and reckless cost-cutting than on fostering sustainable, responsible governance?)

One of DOGE's first public moves? Launching a federal website with a misconfigured database that anyone could edit: no passwords, no security, no oversight. Developers quickly found the flaw and left behind pointed messages:

“This is a joke of a .gov site.”

“THESE ‘EXPERTS’ LEFT THEIR DATABASE OPEN.”

The messages stayed visible for hours.

These aren't isolated incidents—they point to a deeper issue. The people running critical government systems are either dangerously incompetent, careless, or both. Even someone like Elon Musk, who clearly understands technology, can still show a troubling disregard for responsible governance.

The issue isn't merely about technical skill; it's about judgment. Signal doesn't track who sees what or restrict access to sensitive conversations, and it certainly isn't built for classified discussions. DOGE didn't modernize anything either. It swapped reliable, secure processes for flashy shortcuts: its federal website was built on popular frameworks like Next.js and React, but the team skipped basic safeguards, such as requiring authentication before anyone could write to the database, prioritizing a quick launch over stability and security.

Why This Matters More Than Ever

All of this is unfolding just as we may be entering one of the most significant technological shifts in history: artificial general intelligence (AGI). AGI refers to AI systems capable of performing almost any intellectual task a human can, potentially exceeding human capabilities in crucial areas.

Some experts say AGI could emerge within the next decade, fundamentally reshaping national security, the economy, and governance at every level. Ben Buchanan, former special adviser for artificial intelligence in the Biden White House, captured the urgency of this moment clearly:

“I think the most exciting thing about AI is also the scariest, which is the pace of change.”

Now imagine this kind of reckless technological governance applied to powerful AI systems managing military operations, financial markets, or critical public services. Weak oversight and careless management wouldn't just result in embarrassing headlines—it could allow dangerous biases and catastrophic mistakes to slip by unnoticed until it's too late.

What's the Real Issue?

This isn't just about Signal, DOGE, or even AGI individually. It's about the widening gap between what responsible governance requires and what our leaders seem capable of, or even interested in, managing.

We can't afford to keep acting as if technology alone can replace thoughtful, structured decision-making. Ignoring basic processes isn't innovation—it's negligence. And as these technological tools become vastly more powerful, the consequences of mishandling them multiply exponentially.

If government leaders can't even securely manage something as simple as a group chat, how can we trust them with the far bigger challenges ahead?